A recent analysis by the IMF estimates that roughly 40% of jobs globally, and roughly 60% of jobs in advanced economies, will be impacted by the growing adoption of AI. How will it affect your role? In my opinion, the surest way to secure your place in these transformed economies is to add AI to the toolset you use to stay competitive.

Fortunately, those of us in the Drupal community have not just one but a multitude of options for adding AI to our sites' capabilities. In this article we will explore two of these options, as well as some of the skills you should consider developing to get the most out of AI.

NOTE: this article will focus on AI-based text generation, though hopefully a future article will talk about creating prompts for image generation.

Basic terminology

Before we go any deeper, let's make sure we're aligned on the meaning of some of the language we'll be using:

Natural Language Processing (NLP) is the general term for being able to work with text provided in a conversational format, understand the intended meaning, and provide a relevant response.

Artificial Intelligence (AI) is a field of study in computer science that develops and studies intelligent machines, often by mimicking human responses, or automating sophisticated behaviour.

Machine Learning (ML) is a subset of AI in which systems learn from data rather than following explicit instructions, using statistical algorithms to generalize from existing data to new, unseen cases.

Deep Learning is a subset of ML that uses artificial neural networks with representation learning, which allows them to learn their own features for classification and other detection tasks.

A Large Language Model (LLM) is an AI algorithm that uses Deep Learning techniques to accomplish NLP tasks such as responding to unstructured user prompts. LLMs are trained on massive data sets, often gathered from the internet, but sometimes using more specialized data.

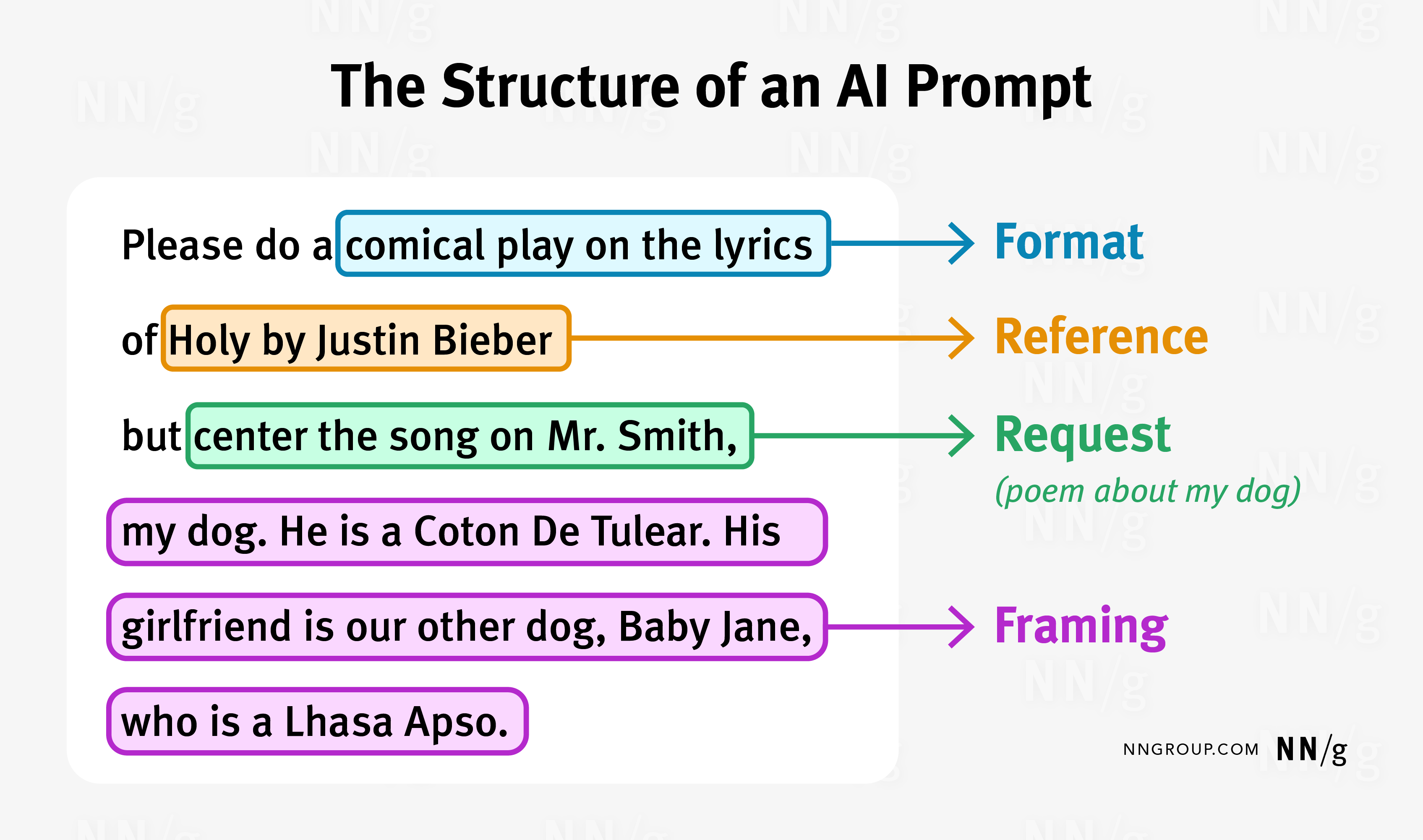

At its most basic level, a prompt is how you ask an AI model (typically an LLM) for the output you want. This can be as simple as posing a question, similar to what you might type into the ChatGPT interface. You can get better results, however, by carefully crafting your prompt to include elements that help the model understand exactly what its response should contain.

The discipline of Prompt Engineering

With the recognition that how you ask an LLM is as important as what you ask, a new discipline of Prompt Engineering has emerged. In addition to a variety of blogs and tutorials on the subject, there are courses available from providers like Coursera, Udemy, and others. I'll provide a very brief summary of some best practices here.

Basic elements:

- Request: What you want the response to contain

- References: pointers to either previous bot answers or external sources

- Format: the structure and/or style to use for the response

- Framing: Also referred to as Context. You can get better results by including information that will help the LLM understand the background of the request

Another source suggests a similar framework, with elements ordered from most to least important:

- Task

- Context

- Exemplar

- Persona

- Format

- Tone

The key idea both frameworks share is that you can improve the quality of the text generated by tools like ChatGPT by providing additional elements. These elements aren't required, but including them when appropriate will help you get the best results. OpenAI also provides its own documentation on Prompt Engineering.
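To make the framework concrete, here is a minimal Python sketch that assembles a prompt from those elements. The `PromptSpec` class and `build_prompt` function are hypothetical names used for illustration, not part of any library. Only the task is required, and the order in which elements appear in the final prompt is one reasonable choice rather than a rule (the framework's ordering describes importance, not position).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptSpec:
    """Elements of a prompt; only the task is required."""
    task: str
    context: Optional[str] = None
    exemplar: Optional[str] = None
    persona: Optional[str] = None
    output_format: Optional[str] = None
    tone: Optional[str] = None


def build_prompt(spec: PromptSpec) -> str:
    """Join the provided elements into one prompt, skipping any that are empty."""
    parts = []
    if spec.persona:
        parts.append(f"You are {spec.persona}.")
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Task: {spec.task}")
    if spec.exemplar:
        parts.append(f"Example of the output I want:\n{spec.exemplar}")
    if spec.output_format:
        parts.append(f"Format: {spec.output_format}")
    if spec.tone:
        parts.append(f"Tone: {spec.tone}")
    return "\n\n".join(parts)


# A bare request versus the same request with extra elements added.
minimal = build_prompt(PromptSpec(task="Summarize the article below in three sentences."))
detailed = build_prompt(PromptSpec(
    task="Summarize the article below in three sentences.",
    context="The summary will be used as a teaser on a Drupal community news site.",
    persona="an experienced technology journalist",
    output_format="Plain text, no headings or bullet points.",
    tone="Friendly but professional.",
))
print(detailed)
```

The minimal prompt is just the task; the detailed one wraps the same task in persona, context, format, and tone, which is the kind of extra guidance both frameworks recommend.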

Prompt Engineering, AI, and Drupal

Drupal's AI integration is a perfect illustration of the power of open source. Within weeks of the launch of ChatGPT, Drupal had a working integration. This allowed Drupal sites to start exploring how generative AI could add value for their users.

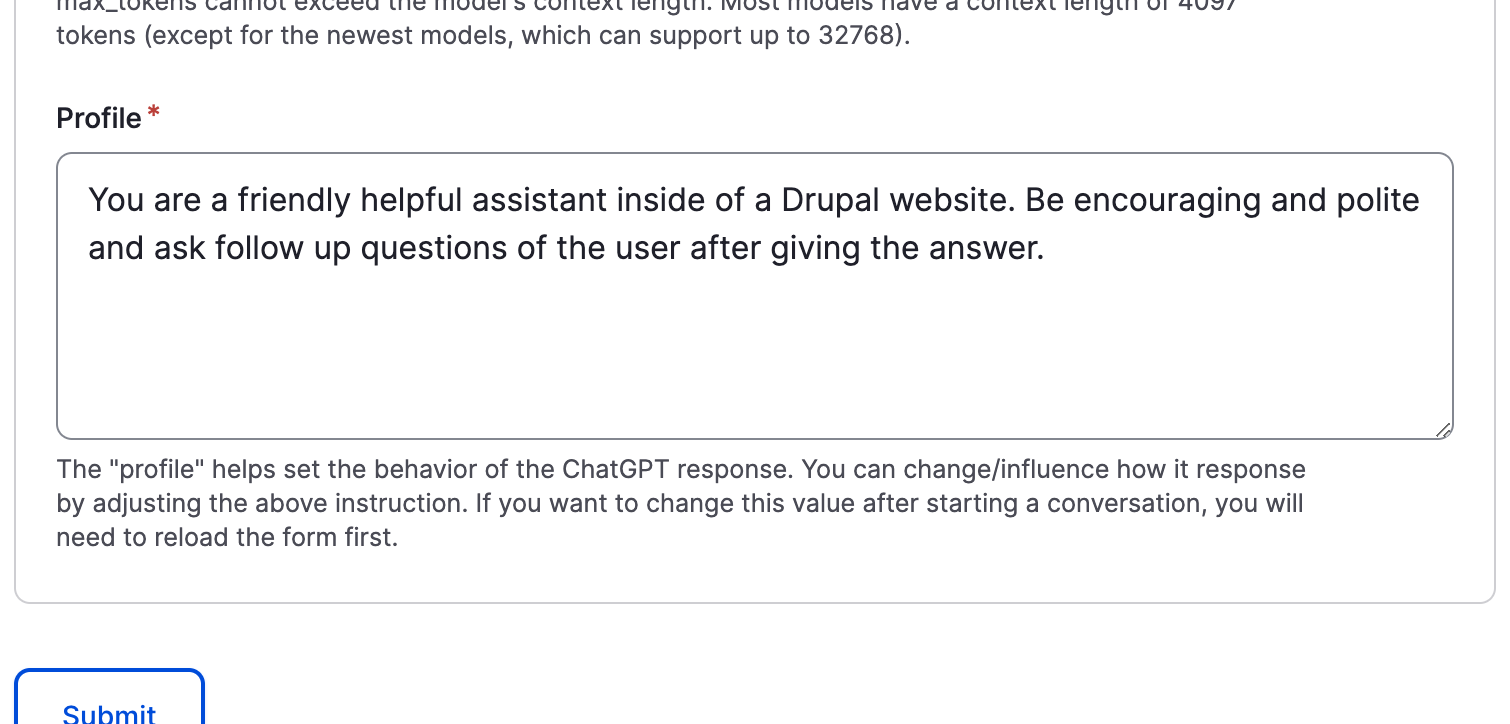

The OpenAI / ChatGPT Integration module initially focused on adding a CKEditor dialog for posing a question, with ChatGPT's answer inserted into the WYSIWYG. New capabilities were quickly added, including tools to analyze or modify content (for example, to change its tone). There are also backend interfaces you can use to query ChatGPT and test the impact of settings such as which model to use, the temperature (which determines how much randomness to allow), and the maximum number of tokens to generate (which caps the amount of text returned, and in turn limits the cost of running the query). One of these interfaces, the "OpenAI ChatGPT Explorer", also includes a Profile field you can use to influence the response.
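The settings these backend interfaces expose map onto parameters of OpenAI's Chat Completions API. As a rough illustration outside Drupal, this Python sketch only builds a request body and does not send it. The function name, the default model name, and the idea of passing a profile-style instruction as a `system` message are assumptions for illustration, not a description of how the OpenAI module works internally. Model names and parameters change over time, so check OpenAI's current API reference before relying on them.

```python
import json
from typing import Optional

# OpenAI's Chat Completions endpoint. This sketch only builds the request body;
# sending it would require a POST with an "Authorization: Bearer <API key>" header.
CHAT_COMPLETIONS_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(question: str, model: str = "gpt-3.5-turbo",
                       temperature: float = 0.7, max_tokens: int = 256,
                       profile: Optional[str] = None) -> dict:
    """Build a Chat Completions request body.

    temperature: 0 to 2; higher values allow more randomness in the output.
    max_tokens: upper limit on generated tokens, which also limits cost.
    profile: optional instructions, sent here as a "system" message
             (an assumption about how a profile-style field could be used).
    """
    if not 0 <= temperature <= 2:
        raise ValueError("temperature must be between 0 and 2")
    if max_tokens < 1:
        raise ValueError("max_tokens must be at least 1")

    messages = []
    if profile:
        messages.append({"role": "system", "content": profile})
    messages.append({"role": "user", "content": question})

    return {
        "model": model,
        "messages": messages,
        "temperature": temperature,
        "max_tokens": max_tokens,
    }


body = build_chat_request(
    "Suggest three headlines for an article about Drupal and AI.",
    temperature=0.2,
    max_tokens=100,
    profile="You are a copy editor for a technology blog.",
)
print(json.dumps(body, indent=2))
```

A low temperature such as 0.2 tends to produce more predictable headlines; raising it produces more varied, but less reliable, output.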

This Profile field is the closest a site builder will get to the more advanced aspects of prompt engineering with the OpenAI module, at least using the interfaces it ships with today. It's worth noting that the action the OpenAI module provides for ECA (Event - Condition - Action) integration also includes a Profile field, which allows for more sophisticated, automated processing of your site's content without writing code.

The beauty of the OpenAI set of modules is how much they are able to do "out of the box". Once you've added your API key and enabled the submodules for whichever capabilities you want to use, you can start getting responses from ChatGPT pretty much right away. The most configuration you might need is adding the CKEditor button to whichever text formats your site uses.

But what if you wanted a way to use AI with Drupal that was more customizable?

Enter Augmentor AI

A few months ago I was doing some research for an article I was writing about Leveraging AI in Drupal websites, and I came across an inspiring session from DrupalSouth called AI Powered Drupal. Murray Woodman of the Morpht Drupal agency talked about a composable approach to using AI with Drupal.

He showcased the Augmentor AI module, which allows a site builder to add buttons to entity forms that, when clicked, trigger AI-powered content generation in a variety of ways. It can generate content into your WYSIWYG from a simple prompt, similar to the OpenAI module. Or, it can generate tags based on the content of one or more other fields.

You can also generate multiple suggestions for a title, and present them to the content creator in a dropdown.

It was the user interface that initially got me excited about Augmentor AI, but since I started using it I've learned to appreciate its flexibility. At the heart of its setup are augmentor configuration entities. Each augmentor includes a few elements:

- A label for easy reference

- A reference to an API key (using the Key module for storage)

- The model to use

- One or more "messages". Each message has a role ("User", "System", or "Assistant") and content. Typically the "system" role is for high level instructions, the "user" is for queries or prompts, and the "assistant" role is for the response, such as when providing examples

- Advanced settings for controlling randomness (with either temperature or Top P), the maximum number of tokens, and more
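To see how these pieces fit together, here is a Python sketch of the data an augmentor holds. The class and field names, and the `{input}` placeholder marking where the form's source text is inserted, are hypothetical and do not reproduce Augmentor AI's actual schema. The example uses a common few-shot pattern: a system message with instructions, then a user/assistant pair demonstrating the expected answer, then the real input.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ALLOWED_ROLES = {"system", "user", "assistant"}


@dataclass
class Message:
    role: str     # "system", "user", or "assistant"
    content: str


@dataclass
class AugmentorConfig:
    """Hypothetical mirror of the settings an augmentor stores."""
    label: str
    key_id: str                      # ID of a stored key, not the secret itself
    model: str
    messages: List[Message] = field(default_factory=list)
    temperature: Optional[float] = None
    top_p: Optional[float] = None
    max_tokens: int = 256

    def __post_init__(self) -> None:
        for message in self.messages:
            if message.role not in ALLOWED_ROLES:
                raise ValueError(f"unknown role: {message.role}")
        # Randomness is set with one of these, not both.
        if self.temperature is not None and self.top_p is not None:
            raise ValueError("set either temperature or top_p, not both")

    def render(self, source_text: str) -> List[dict]:
        """Return API-style messages with {input} replaced by the source text."""
        return [
            {"role": m.role, "content": m.content.replace("{input}", source_text)}
            for m in self.messages
        ]


tag_augmentor = AugmentorConfig(
    label="Suggest tags",
    key_id="openai_api_key",
    model="gpt-3.5-turbo",
    temperature=0.2,
    messages=[
        Message("system", "Suggest up to five short tags. Reply with a comma-separated list only."),
        Message("user", "Drupal 10 uses CKEditor 5 as its default WYSIWYG editor."),
        Message("assistant", "Drupal, CKEditor, Drupal 10, Content editing"),
        Message("user", "{input}"),
    ],
)
rendered = tag_augmentor.render("Augmentor AI adds AI-powered buttons to Drupal entity forms.")
```

Keeping only a key ID in the configuration, rather than the secret itself, reflects the article's point that credentials live in the Key module.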

It's worth noting that different augmentor types may have somewhat different structures. An augmentor type is defined by a companion module, and generally determines which AI service and which API will be used. There are currently modules available to integrate with ChatGPT, Google Cloud Vision, AWS AI, and more.

You can define multiple augmentors for the same AI service, based on the ways you want to use it. Effectively, each augmentor defines the structure of a prompt designed to achieve specific results. For example, I defined separate augmentors to generate tags and title suggestions, even though both used ChatGPT. In the same way, I could make multiple augmentors available for CKEditor text generation, each creating content with a certain tone or in a specific structure. Ever wanted a button that could generate a Shakespearean sonnet about a subject of your choosing?
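Because each augmentor shapes its own prompt, it can also ask for output in a form that is easy to post-process. The Python sketch below assumes the tags augmentor was told to reply with a comma-separated list and the title augmentor with one title per line; the function names are hypothetical and only illustrate how such replies could become tag values or dropdown options.

```python
import re
from typing import List


def parse_tags(response: str, limit: int = 5) -> List[str]:
    """Split a comma-separated reply into trimmed tags, dropping duplicates."""
    tags: List[str] = []
    seen = set()
    for raw in response.split(","):
        tag = raw.strip().strip('"').strip()
        key = tag.lower()  # treat "Drupal" and "drupal" as the same tag
        if tag and key not in seen:
            seen.add(key)
            tags.append(tag)
    return tags[:limit]


def parse_title_options(response: str) -> List[str]:
    """Turn a numbered or bulleted list of titles into dropdown options."""
    options: List[str] = []
    for line in response.splitlines():
        # Remove a leading list marker such as "1.", "2)", "-" or "*".
        title = re.sub(r"^\s*(?:\d+[.)]|[-*])\s*", "", line).strip()
        if title:
            options.append(title)
    return options


tags = parse_tags('Drupal, AI, "Prompt engineering", drupal, , CKEditor')
titles = parse_title_options("1. Drupal Meets AI\n2) Smarter Content Creation\n- Prompt Engineering 101\n")
print(tags)
print(titles)
```

Asking the model for a predictable format in the augmentor's instructions is what makes this kind of simple parsing workable; free-form replies would need more defensive handling.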

If there's a downside to using Augmentor, it's the amount of work to set it up. In addition to installing the core module and the submodules for the capabilities you need, you have to install a module that provides an augmentor type for your chosen AI provider, as well as the Key module. Once you've created a key for your API credentials, you can start putting your prompt engineering skills to work by creating one or more augmentors.

Next, you'll need to make your augmentors available to your content creators. For CKEditor, this means adding the button and configuring which augmentors should be available. To add buttons to your forms, you need to add a field for each button, and then, in the Manage form display tab, choose which widget to use, set the source and target fields, specify the augmentor, and more. It can take a little trial and error to get everything working, but the end result (in my experience) is something that behaves exactly the way you want. Brilliant.
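Conceptually, a button widget connects three things: the source fields it reads, the augmentor it calls, and the target field it fills. The following Python sketch models that flow with a plain dictionary standing in for the entity and a stub function standing in for the AI call. All names (including `field_tags`) are hypothetical; the real module does this within Drupal's form system, in PHP.

```python
from typing import Callable, Dict, List


def run_augmentor_button(entity: Dict[str, str], source_fields: List[str],
                         target_field: str,
                         augmentor: Callable[[str], str]) -> Dict[str, str]:
    """Join the source fields, send the text to the augmentor, and return a
    copy of the entity values with the result written to the target field."""
    source_text = "\n\n".join(
        entity[name] for name in source_fields if entity.get(name)
    )
    if not source_text:
        raise ValueError("the source fields are empty")
    updated = dict(entity)
    updated[target_field] = augmentor(source_text)
    return updated


def fake_tag_augmentor(text: str) -> str:
    # Stand-in for a real AI call so the flow can be tried offline.
    return "Drupal, AI" if "Drupal" in text else ""


node = {
    "title": "Drupal and AI",
    "body": "Augmentor AI adds buttons to entity forms.",
    "field_tags": "",
}
result = run_augmentor_button(node, ["title", "body"], "field_tags", fake_tag_augmentor)
print(result["field_tags"])
```

Returning a copy leaves the original values untouched, which fits a workflow where the content creator reviews the suggestion before saving.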

Additional reading:

NNGroup: Prompt Structure in Conversations with Generative AI

Magical: How to Write Good AI Prompts: A Beginner’s Guide (+12 Templates)

SocialMediaToday: 12 ChatGPT Tone Modifiers To Improve Your AI-Generated Content [Infographic]

Forbes: 12 New Jobs For The Generative AI Era
